Four Seasons of AI: A Brief History

A journey through the history of artificial intelligence, from its optimistic beginnings in the 1950s through AI winters and resurgences, to the modern era of deep learning and large language models.

Antony Lu

Few fields in science have experienced such dramatic swings between euphoria and despair as artificial intelligence. Since the mid-twentieth century, AI has moved through recurring cycles — bursts of optimism where researchers predicted human-level machines were just years away, followed by painful periods of disillusionment, funding cuts, and public skepticism. These phases have come to be known as “AI springs,” “AI summers,” and “AI winters.” Understanding them is not merely an exercise in nostalgia. The patterns of overpromise and correction that shaped the field’s past continue to inform how we develop, fund, and talk about AI today. This is the story of those seasons.

AI Spring: The Birth of AI

Long before the term “artificial intelligence” existed, thinkers on both sides of the Atlantic were laying its intellectual foundations — sometimes in fiction, sometimes in wartime secrecy, and sometimes with nothing more than pen and paper.

The United States: Asimov and the Dream of Thinking Machines

In 1942, Isaac Asimov published the short story “Runaround,” introducing his famous Three Laws of Robotics, a set of ethical rules governing the behavior of intelligent machines. Although pinpointing the exact roots of AI is difficult, Asimov’s fiction proved remarkably influential. His stories gave scientists and engineers a shared vocabulary for thinking about machine autonomy, moral reasoning, and the relationship between humans and their creations. Many researchers would later cite Asimov as the spark that first drew them toward the field.

The United Kingdom: Alan Turing and the Foundations of Computing

Across the Atlantic, the groundwork for AI was being laid in a far more urgent context. During the Second World War, the British mathematician Alan Turing designed the Bombe, an electromechanical device that helped Allied codebreakers recover the daily settings of the German Enigma cipher machine so that its messages could be deciphered. Turing’s wartime work demonstrated that complex reasoning tasks could, in principle, be mechanized. In 1950, he published the landmark paper “Computing Machinery and Intelligence,” in which he posed the deceptively simple question: “Can machines think?” He proposed what he called the imitation game and what is now known as the Turing Test: a machine could be considered intelligent if a human interrogator could not reliably distinguish its responses from those of another person. Turing is widely regarded as the father of both theoretical computer science and artificial intelligence.

Sweden: Arne Beurling and the Art of Codebreaking

Sweden contributed its own quiet genius. In 1940, the mathematician Arne Beurling deciphered the encrypted teleprinter traffic of Nazi Germany in just two weeks, using nothing but pen and paper. His feat was one of the great intellectual achievements of the war, and it demonstrated the kind of pattern recognition and abstract reasoning that AI researchers would later try to replicate in machines. When colleagues asked Beurling how he had done it, he offered only a cryptic reply: “A magician does not reveal his secrets.”

The Dartmouth Conference: AI Gets Its Name

The field officially came into being in the summer of 1956, when a young mathematician named John McCarthy organized a workshop at Dartmouth College in Hanover, New Hampshire. Together with Marvin Minsky, Nathaniel Rochester, and Claude Shannon, McCarthy proposed studying the conjecture that “every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it.” It was in the 1955 proposal for this workshop that the term “Artificial Intelligence” was coined. The Dartmouth Conference brought together many of the researchers who would dominate the field for decades. It marked the moment AI ceased to be a scattered collection of ideas and became a recognized discipline with a name, an agenda, and a community.

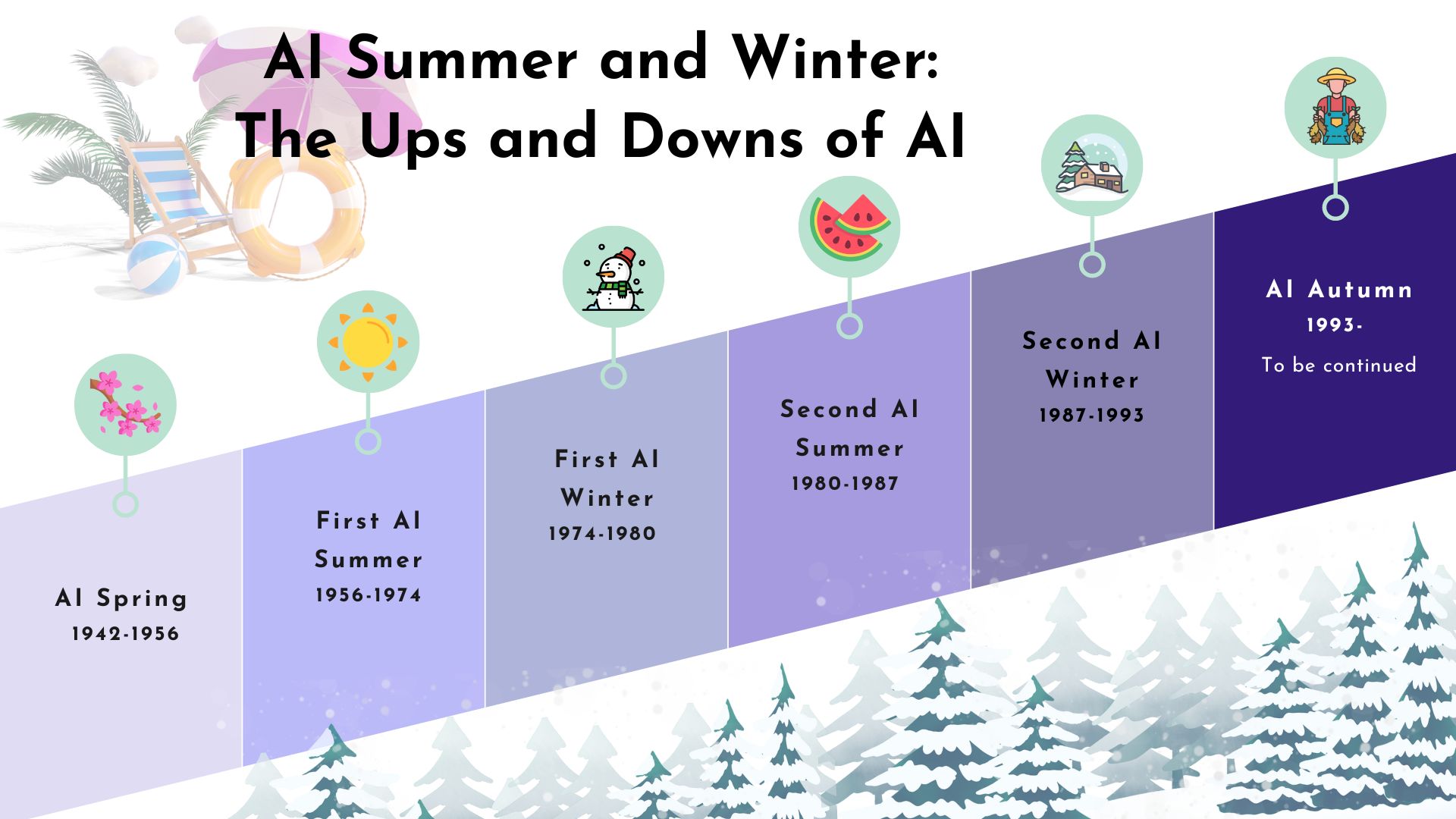

AI Summer and Winter

The First Summer: Optimism and Early Breakthroughs

The years following Dartmouth were electric with possibility. Funding flowed from government agencies, and researchers made rapid early progress. One notable achievement was ELIZA, a natural language processing program created by Joseph Weizenbaum at MIT in 1966. ELIZA simulated a Rogerian psychotherapist: it scanned users’ statements for keywords and reflected them back as questions. It was remarkably simple in its design, yet people who interacted with it often became emotionally attached, confiding in the program as though it were a real therapist. ELIZA demonstrated, perhaps for the first time, that machines could create a convincing illusion of understanding without any actual comprehension.
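To make that mechanism concrete, here is a toy sketch in Python of the keyword matching and pronoun “reflection” ELIZA relied on. It is not Weizenbaum’s original DOCTOR script: the rules, the reflection table, and the functions `reflect` and `respond` are hypothetical illustrations, and the real program used a much richer system of ranked keywords and decomposition rules.

```python
import re

# Hypothetical pronoun swaps that turn the user's point of view into the program's.
REFLECTIONS = {
    "i": "you",
    "me": "you",
    "my": "your",
    "am": "are",
    "you": "I",
    "your": "my",
}

# Hypothetical (pattern, response template) rules, tried in order.
# The last pattern matches any single-line statement, so there is always a reply.
RULES = [
    (re.compile(r"i feel (.*)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"i am (.*)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r".*"), "Please go on."),
]


def reflect(fragment: str) -> str:
    """Swap first- and second-person words so a fragment can be echoed back.

    Words are lowercased before lookup, so capitalization is not preserved.
    """
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(statement: str) -> str:
    """Reply using the first rule whose pattern matches the whole statement."""
    cleaned = statement.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.fullmatch(cleaned)
        if match:
            return template.format(*(reflect(group) for group in match.groups()))
    # Fallback for input the catch-all cannot match (e.g. text containing newlines).
    return "Please go on."


if __name__ == "__main__":
    print(respond("I feel you hate me."))  # Why do you feel I hate you?
    print(respond("I am unhappy"))  # How long have you been unhappy?
```

Because the program only rearranges the user’s own words, it can sound attentive while representing nothing about what was actually said.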

The optimism of this era reached its peak when Marvin Minsky, by then one of the most prominent figures in AI, declared in 1970 that machines would achieve human-level intelligence “within three to eight years.” It was a bold claim, and it captured the mood of a field that believed its greatest challenges were solved in principle, with only engineering details left to work out.

The First Winter: Lighthill, Congressional Criticism, and Funding Cuts

Reality intervened swiftly. In 1973, the British mathematician Sir James Lighthill published a devastating critique of AI research for the UK Science Research Council. Lighthill argued that the field had failed to deliver on its grand promises and that progress in AI was far more limited than its advocates admitted. His report led the British government to halt funding for AI research at all but a handful of universities. Across the Atlantic, similar skepticism was building. The United States Congress questioned the return on its AI investments, and funding from agencies like DARPA was sharply reduced. The first “AI winter” had arrived — a period defined not so much by a lack of ideas as by a painful gap between what researchers had promised and what they had actually delivered.

The Second Summer: Expert Systems and Commercial Interest

The chill did not last forever. In the early 1980s, a new approach called “expert systems” revived interest in AI. These programs encoded the knowledge of human specialists into large sets of if-then rules, enabling machines to make decisions in narrow domains such as medical diagnosis, mineral exploration, and financial analysis. Corporations rushed to build AI departments, and governments launched ambitious national programs. Japan’s Fifth Generation Computer Systems project, launched in 1982, aimed to create machines capable of reasoning, conversation, and knowledge processing. Investment soared, and for a time it seemed that AI was on the verge of transforming industry.
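The “if-then rules” behind expert systems can be sketched in a few lines of Python. This is a minimal forward-chaining example under stated assumptions: the `Rule` class, the `forward_chain` function, and the medical-sounding fact names are hypothetical and not taken from any historical system, which typically held far larger rule bases maintained by knowledge engineers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """IF every condition is a known fact, THEN the conclusion becomes a fact."""

    conditions: frozenset[str]
    conclusion: str


# Hypothetical rules in the spirit of a diagnostic expert system.
RULES = [
    Rule(frozenset({"fever", "cough"}), "respiratory_infection"),
    Rule(frozenset({"respiratory_infection", "chest_pain"}), "refer_to_specialist"),
]


def forward_chain(facts: set[str], rules: list[Rule]) -> set[str]:
    """Fire rules repeatedly until no rule adds a new fact (forward chaining)."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for rule in rules:
            # A rule fires only when all its conditions are already known.
            if rule.conditions <= known and rule.conclusion not in known:
                known.add(rule.conclusion)
                changed = True
    return known


if __name__ == "__main__":
    # A case the rules cover: one conclusion enables the next.
    print("refer_to_specialist" in forward_chain({"fever", "cough", "chest_pain"}, RULES))  # True
    # A case outside the rules: nothing new is inferred.
    print(forward_chain({"rash"}, RULES))  # {'rash'}
```

The second call previews the brittleness that would later undo the approach: a symptom that no rule mentions produces no conclusion at all, and the only remedy is for someone to write and maintain yet more rules.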

The Second Winter: Disillusionment Returns

But expert systems had fundamental weaknesses. They were brittle — they broke down when confronted with situations outside their pre-programmed rules. They were expensive to build and even more expensive to maintain. And as desktop personal computers grew more powerful and more affordable throughout the late 1980s, the specialized LISP machines that had underpinned much of the AI industry were rendered obsolete almost overnight. The expert systems market collapsed. Japan quietly wound down its Fifth Generation project in 1992, acknowledging that its most ambitious goals had not been met. The second AI winter set in, and once again the field retreated from public view, sustained only by a handful of determined researchers who continued their work in relative obscurity.

Looking Back, Looking Forward

The cyclical history of artificial intelligence is more than a curiosity. It is a reminder that technological progress rarely follows a straight line. Each spring and summer brought genuine breakthroughs — ideas and systems that advanced our understanding of intelligence and computation. Each winter, though painful, cleared away unrealistic expectations and forced the field to develop more rigorous methods. The researchers who persisted through the winters — refining algorithms, building better datasets, and waiting for hardware to catch up with theory — laid the foundations for the modern AI era that would follow. Understanding these seasons helps us approach today’s AI achievements with both the excitement they deserve and the measured perspective that history demands.

References: Haenlein & Kaplan (2019), “A Brief History of Artificial Intelligence”; Beckman (2002), “A Brief History of AI.”